Choosing the Right AI Approach for Your Business: A Practical Decision Tree

Mar 12, 2026

Picking the right AI solution often gets messy. Some say, "We'll take a ready-made SaaS and forget about it." Others say, "We need a custom solution, there's no other way." Still others jump straight to "let's fine-tune the model on our own data." So let's break this down. You'll leave with a clear way to decide - when to buy an off-the-shelf AI tool, when to build in-house, when RAG is needed, and when fine-tuning is preferable.

First question - what problem are you trying to solve?

Here's something rarely discussed - the same "AI task" can in fact be many different tasks.

"Build us a chatbot for customer support" might mean generating responses, searching a knowledge base, or routing tickets.

"We need AI for sales" can mean anything from call summarization to populating a CRM to a quote assistant.

And yes, it sounds a bit obvious. But this is where months are saved.

Quick check (1 minute):

Does your AI need to know precise information from your documents? Or do you need it to behave in a consistent manner (tone, format, style, structure)?

If "information," you're already leaning towards RAG.

If "behavior," you might need fine-tuning (but not always).

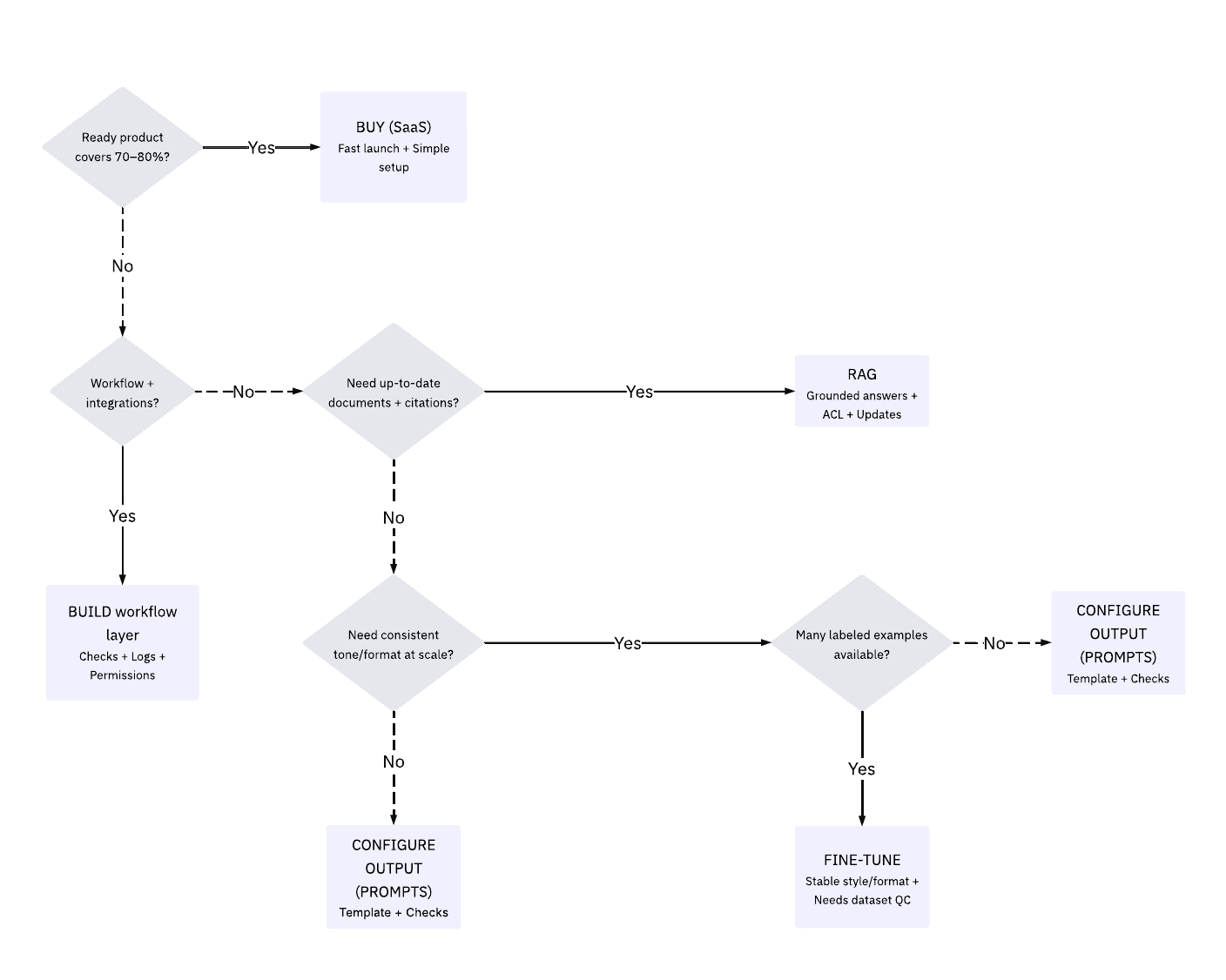

Decision Tree: 4 Questions That Resolve 80% of Debates

These are practical questions that teams use when they don't want to burn through their budget.

"Is there a ready AI product that covers 70-80% of what you need?"

If yes, buy it.

It sounds trivial, but it's a mature approach. Especially if you don't have a team that enjoys fixing maintenance issues at 3 AM.

When “buying” makes sense:

the task is simple (support, notes, search, meeting summaries);

you prioritize launch speed over maximum accuracy;

the risk of error is moderate;

integrations are simple.

A quick note - sometimes "buy" isn't about saving money, it's about saving stress. And that's valuable too.

"Is it more about process and integration than knowledge?"

If yes, customize it (build a thin workflow layer on top of an LLM and your tools).

Example: "After a sales call, have the AI fill in fields in HubSpot / Salesforce and set the next step."

The key here isn't "smart responses," but correct actions and validation (to prevent the data in the CRM from turning into a mess).

Signs you should build a custom workflow:

many steps, statuses, checks;

format control is needed;

what exactly goes into the system matters;

you are willing to invest in quality (validation, logging, permissions).
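To make "correct actions and validation" concrete, here is a minimal sketch of a check that could run before anything is written to the CRM. The field names (`stage`, `next_step`, `next_step_due`) and the list of allowed stages are assumptions for illustration, not the schema of HubSpot or Salesforce.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical deal stages; a real CRM defines its own.
ALLOWED_STAGES = {"discovery", "proposal", "negotiation", "closed_won", "closed_lost"}


@dataclass
class ValidationResult:
    ok: bool
    errors: list


def validate_crm_update(fields: dict) -> ValidationResult:
    """Check model-extracted fields before they reach the CRM."""
    errors = []

    stage = fields.get("stage")
    if stage not in ALLOWED_STAGES:
        errors.append(f"unknown stage: {stage!r}")

    next_step = fields.get("next_step", "")
    if not isinstance(next_step, str) or not next_step.strip():
        errors.append("next_step is empty")

    try:
        # Raises ValueError for anything that is not an ISO date like 2026-04-01.
        date.fromisoformat(str(fields.get("next_step_due", "")))
    except ValueError:
        errors.append("next_step_due is not an ISO date")

    return ValidationResult(ok=not errors, errors=errors)
```

An update that fails validation can be logged and sent to a person instead of being saved, which is what keeps the CRM data from turning into a mess.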

"Do you need answers that strictly follow your up-to-date documents, with citations/links?"

If yes, then RAG (retrieval-augmented generation: search + extraction + responses based on sources).

This is the same "chat with your data," but with verifiable responses.

Signs you need RAG:

knowledge changes often (policies, pricing, instructions, regulations);

"Why is it like this?" and "Where is it written?" are important;

audit and access control are critical;

you need accuracy beyond "it seems about right."
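The "search + responses based on sources" idea can be sketched in a few lines. This is a toy version under loud assumptions: the policy snippets and their ids are made up, and plain word overlap stands in for a real search or vector index. The call to the model itself is left out.

```python
import re

# Made-up policy snippets for illustration only.
DOCS = [
    {"id": "travel-policy#4.2", "text": "Taxi rides after 10:00 PM are reimbursed with a receipt."},
    {"id": "travel-policy#5.1", "text": "Hotel bookings require manager approval."},
    {"id": "it-policy#2.3", "text": "Laptops must use full disk encryption."},
]


def tokenize(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: list, k: int = 2) -> list:
    """Rank documents by word overlap with the question (a stand-in for real search)."""
    q = tokenize(question)
    scored = [(len(q & tokenize(d["text"])), d) for d in docs]
    scored = [(score, d) for score, d in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:k]]


def build_grounded_prompt(question: str, sources: list) -> str:
    """Ask the model to answer only from numbered sources and to cite them."""
    if not sources:
        return ""  # nothing retrieved: better to report "not found" than to guess
    listed = "\n".join(
        f"[{i}] ({d['id']}) {d['text']}" for i, d in enumerate(sources, start=1)
    )
    return (
        "Answer using only the sources below and cite them like [1].\n"
        "If the sources do not contain the answer, say so.\n\n"
        f"{listed}\n\nQuestion: {question}"
    )
```

Because every source carries an id, the answer to "Where is it written?" can be shown next to the response.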

"Do you need the model to consistently write 'like us,' in the same style and format?"

This is where fine-tuning may come in.

But (and this is important) fine-tuning is rarely needed in the first sprint. Good prompts, templates, examples, and checks are often sufficient.
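As a rough illustration of "prompts, templates, examples, and checks," the sketch below builds a few-shot prompt and then verifies the reply format. The ticket categories, the example tickets, and the JSON shape are all assumptions made up for this example.

```python
import json
from typing import Optional

# Hypothetical labeled examples and categories.
FEW_SHOT = [
    ("Where is my order?", {"category": "shipping", "urgent": False}),
    ("My card was charged twice!", {"category": "billing", "urgent": True}),
]
ALLOWED_CATEGORIES = {"shipping", "billing", "other"}


def build_few_shot_prompt(ticket: str) -> str:
    """Template: instruction, worked examples, then the new ticket."""
    lines = [
        'Classify the support ticket. Reply with JSON only: '
        '{"category": "shipping|billing|other", "urgent": true|false}',
        "",
    ]
    for text, label in FEW_SHOT:
        lines += [f"Ticket: {text}", f"Answer: {json.dumps(label)}", ""]
    lines += [f"Ticket: {ticket}", "Answer:"]
    return "\n".join(lines)


def check_reply(reply: str) -> Optional[dict]:
    """Return the parsed reply if it matches the expected format, else None."""
    try:
        data = json.loads(reply)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    category = data.get("category")
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        return None
    if not isinstance(data.get("urgent"), bool):
        return None
    return data
```

If `check_reply` keeps returning `None` on a large share of real traffic even after the prompt has been improved, that is one signal that fine-tuning may be worth considering.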

Signs you need fine-tuning:

many similar cases (thousands of examples);

tone/style must be consistent (brand, legal language);

the model must reliably adhere to the format (e.g., complex classifications);

you are ready to manage the dataset and quality control.
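The four questions above can be sketched as a small function. This is one possible reading, not a definitive rule: it assumes that a "yes" to the first question ends the discussion, that the remaining answers can combine, and that "thousands of examples" is the gate for fine-tuning. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Answers:
    product_covers_most_needs: bool         # question 1
    mostly_process_and_integration: bool    # question 2
    needs_cited_up_to_date_answers: bool    # question 3
    needs_consistent_style_or_format: bool  # question 4
    has_thousands_of_examples: bool = False


def recommend(a: Answers) -> list:
    """Map the four answers to one or more suggested approaches."""
    if a.product_covers_most_needs:
        return ["buy"]

    suggestions = []
    if a.mostly_process_and_integration:
        suggestions.append("custom workflow")
    if a.needs_cited_up_to_date_answers:
        suggestions.append("RAG")
    if a.needs_consistent_style_or_format:
        if a.has_thousands_of_examples:
            suggestions.append("fine-tuning")
        else:
            suggestions.append("prompts, templates, and checks first")
    return suggestions or ["clarify the problem first"]
```

For example, `recommend(Answers(False, True, True, False))` suggests a custom workflow combined with RAG, which matches cases where the assistant must both act in your systems and answer from your documents.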

Option 1. Buy off the shelf: fast, easy, sometimes limiting

Ready-made solutions are good when:

you want AI ASAP.

you're happy with the standard user experience.

you don't want to build a platform in-house, or don't have the resources to.

Example: Intercom/Zendesk-like support automation.

You get the basics - agent prompts, response drafts, routing. And this is often enough to produce results.

But if you have complex rules, different products, and complicated permissions, a ready-made product can become a bottleneck.

Option 2. Custom build: more control, more responsibility

Customizing is like assembling a kitchen for your apartment - convenient, beautiful, everything is in place, but someone has to own it end-to-end.

Example: an assistant for the procurement department.

It reads emails, extracts terms and conditions, checks them against the contract, creates a record in the system, and flags anything unclear for human review.
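The "check against the contract and flag for human review" step might look roughly like this. It assumes the extraction has already produced a flat dictionary of terms; the field names in the usage note are invented for illustration.

```python
def compare_terms(extracted: dict, contract: dict) -> dict:
    """Sort contract fields into matches, mismatches, and items for human review."""
    result = {"match": [], "mismatch": [], "needs_review": []}
    for field, expected in contract.items():
        value = extracted.get(field)
        if value is None:
            # The email did not state this term clearly: a person should look.
            result["needs_review"].append(field)
        elif value == expected:
            result["match"].append(field)
        else:
            result["mismatch"].append(field)
    return result
```

With a contract of `{"payment_days": 30, "unit_price": 12.5, "currency": "EUR"}` and extracted terms of `{"payment_days": 45, "unit_price": 12.5}`, the payment term lands in `mismatch` and the missing currency in `needs_review`.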

Here, AI isn't a chatterbox, but a process operator. Many companies start with a "chatbot" and end up embedding AI in forms, cards, and statuses. And that's okay. This is how the product matures.

Option 3. RAG: When "knowing" is more important than "speaking beautifully"

RAG is needed when you want the AI to respond strictly based on your sources.

Example: an internal assistant for employees on policies and procedures.

If someone asks, "Can I pay for a taxi after 10:00 PM?" they want a link to the rule and a clear explanation.

Details are important here:

which documents are included in the search;

how the index is updated;

how access rights are structured (one department shouldn't see documents of another);

how sources are displayed.
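Two of these details, access rights and source display, can be sketched as follows. The assumption here is that each document is tagged with one owning department and that `"all"` marks company-wide documents; real permission models are usually richer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    department: str  # owning department; "all" means company-wide (assumed convention)
    text: str


def visible_docs(docs: list, user_department: str) -> list:
    """Filter BEFORE search, so restricted text never reaches the model."""
    return [d for d in docs if d.department in ("all", user_department)]


def format_sources(docs: list) -> str:
    """Numbered source list to show under the answer."""
    return "\n".join(f"[{i}] {d.doc_id}" for i, d in enumerate(docs, start=1))
```

Filtering before retrieval, rather than hiding results afterwards, means a restricted document cannot leak into an answer through the prompt.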

Option 4. Fine-tuning: When stability and predictability matter

Fine-tuning is often sold as a "magic bullet." In reality, it is a tool for stability, not for knowledge retrieval.

Example: generating product descriptions in a consistent style for a catalog.

If you have thousands of product listings and the brand messaging needs to be consistent, fine-tuning can pay off.

But if your goal is "to make the model know our price list," fine-tuning is not the right choice. The price list changes, and you'll grow tired of constantly re-tuning.
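For the catalog example, "managing the dataset" often starts with something as plain as cleaning and deduplicating examples before training. The sketch below writes prompt/completion pairs as JSONL. The field names are an assumption: training formats differ between providers, so check the one you use.

```python
import json


def to_jsonl(examples: list) -> str:
    """Serialize training examples to JSONL, skipping empty and duplicate rows."""
    seen = set()
    lines = []
    for ex in examples:
        prompt = str(ex.get("prompt", "")).strip()
        completion = str(ex.get("completion", "")).strip()
        if not prompt or not completion:
            continue  # incomplete rows teach the model nothing useful
        key = (prompt, completion)
        if key in seen:
            continue  # exact duplicates skew the dataset
        seen.add(key)
        lines.append(
            json.dumps({"prompt": prompt, "completion": completion}, ensure_ascii=False)
        )
    return "\n".join(lines)
```

Style and tone belong in examples like these. Prices do not, because they change and would have to be retrained every time.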

Final Thought

AI can deliver significant results, but only if you choose the "smartest" solution rather than the "flashiest" one - whether that means buying, building your own, integrating RAG, or going for fine-tuning.

Failures often occur not because of the technology, but because of wrong choices - teams buy ready-made solutions where integration and control are needed; they fine-tune where up-to-date sources are needed; they build custom solutions where a purchase would suffice.

If you treat AI as a quick fix, it inevitably starts to cost more - in the form of re-testing, edits, and loss of trust.

If you treat it as a manageable capability, with a chosen role, clear boundaries, and regular checks, it begins to function as part of the system, not as a perpetual demo.